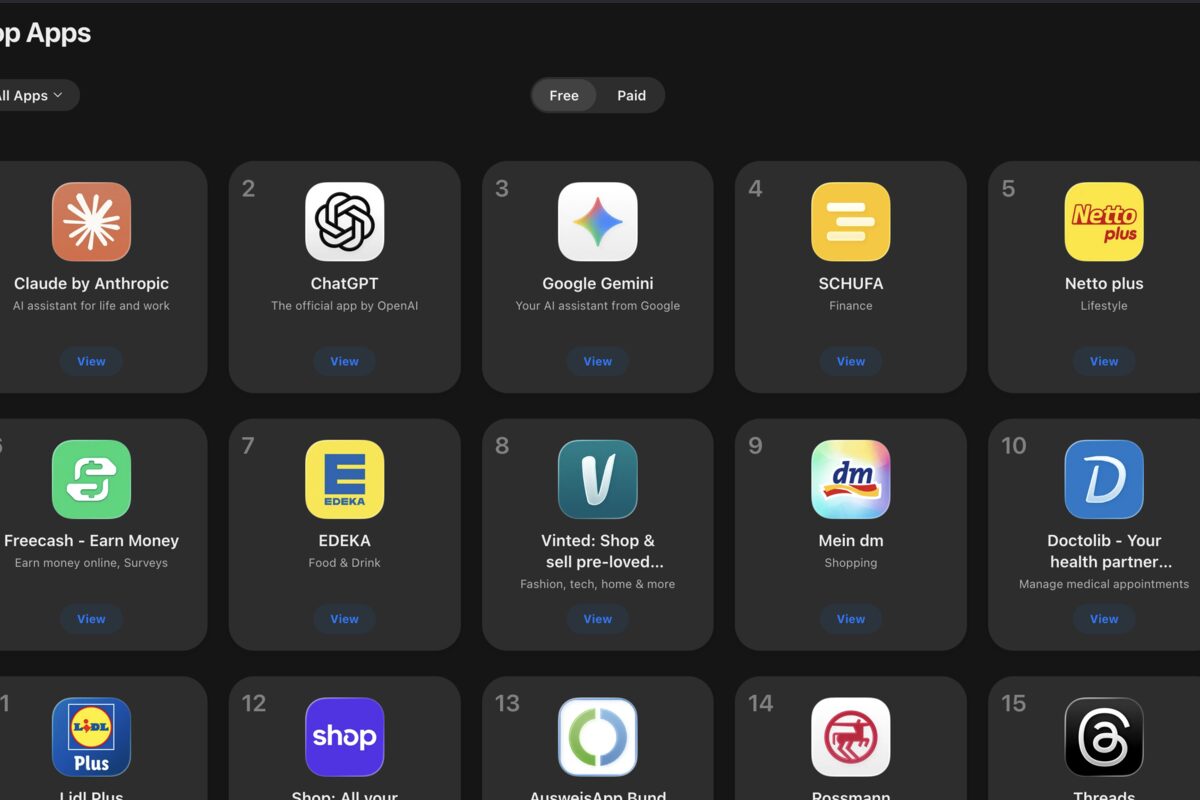

Your app has 200 five-star reviews. Your competitor has 50 five-star reviews. You're ranking lower than them in search results. Why?

Because Apple and Google don't just care about the star rating. They care about recency. They care about velocity. They care about how fresh your feedback is.

Review signals drive app store ranking more directly than almost anything else you can optimize. But the signal is nuanced, and most developers get it wrong.

Let me walk you through what the data actually shows about how reviews impact ASO, and what you should be doing about it.

Here's the dirty secret nobody talks about: Apple's algorithm cares way more about when you got reviews than how many you have.

A 3.8-star app with 50 reviews from the last month can outrank a 4.6-star app whose 200 reviews are all six months old.

The logic is sound: if users are actively leaving reviews, the app is actively being used and updated. An app with old reviews? It might be abandoned. It might be broken. The algorithm defaults to showing users something that's alive.

This is critical for your ASO strategy. Don't obsess over total review count. Obsess over review velocity. A steady stream of new reviews — even a mix of 4 and 5 stars — signals more than a stale backlog of perfect ratings.
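Velocity is simple to measure on your own data. Here is a minimal sketch. It assumes you can export the date of each review. The `review_velocity` helper and its 30-day window are illustrative choices, not a metric Apple or Google publishes. The sample data is made up to mirror the 50-versus-200 example above.

```python
from datetime import date, timedelta

def review_velocity(review_dates: list[date], today: date, window_days: int = 30) -> float:
    """Average reviews per day over the `window_days` days ending at `today`."""
    cutoff = today - timedelta(days=window_days)
    recent = [d for d in review_dates if cutoff < d <= today]
    return len(recent) / window_days

today = date(2024, 6, 30)
# Hypothetical data: 200 stale reviews vs. 50 fresh ones.
stale = [today - timedelta(days=180)] * 200                  # all six months old
fresh = [today - timedelta(days=i % 30) for i in range(50)]  # spread over the last 30 days

print(len(stale), review_velocity(stale, today))             # 200 0.0
print(len(fresh), round(review_velocity(fresh, today), 2))   # 50 1.67
```

Total count favors the stale app four to one. Velocity favors the fresh app outright.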

Apple and Google use different signals. On Google Play, recency still dominates, but Google weights review volume higher than Apple does.

For Google Play ranking, the formula roughly breaks down like this: recent reviews > review volume > average rating. You can have a 4.1-star app and outrank a 4.5-star app if you're getting more recent reviews.

This creates an interesting dynamic: a steady stream of new reviews, even with a few one- and two-star ones mixed in, beats stale perfection.

On iOS, the payoff from raw review volume is smaller. On Android, the effect is pronounced enough to plan around.
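The rough Google Play ordering above (recent reviews > review volume > average rating) can be written as a sort key. This is a toy model resting on a loud assumption: it treats the ordering as strict and lexicographic, which Google has never published. The `AppStats` fields and the sample apps are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class AppStats:
    name: str
    recent_reviews: int   # hypothetical: reviews received in the last 30 days
    total_reviews: int
    avg_rating: float

def rough_play_key(app: AppStats) -> tuple:
    # Lexicographic comparison: recent reviews first, then volume, then rating.
    return (app.recent_reviews, app.total_reviews, app.avg_rating)

apps = [
    AppStats("polished", recent_reviews=4, total_reviews=900, avg_rating=4.5),
    AppStats("active", recent_reviews=35, total_reviews=400, avg_rating=4.1),
]
ranked = sorted(apps, key=rough_play_key, reverse=True)
print([a.name for a in ranked])  # ['active', 'polished']
```

A strict lexicographic key is the bluntest reading of ">": any edge in recent reviews wins outright. A real ranker presumably blends these signals with keyword relevance and engagement. Treat this as a mental model, not a simulator.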

There's a cliff at 4.0 stars. Apps below 4.0 show a dramatic drop in search ranking. Not because the algorithm hates 3.9 stars, but because users searching for "task management app" see the 3.9-star option and tap the 4.2-star option instead, and those engagement signals feed back into the algorithm.

Getting above 4.0 is important. Staying above 4.0 matters more. But going from 4.0 to 4.5? That matters less than recency.

Strategy: if you're below 4.0, fix it. Respond to negative reviews, address the problems, drive re-ratings. If you're above 4.0, focus on keeping reviews recent rather than obsessing over marginal rating improvements.
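If you're below 4.0, it helps to know how far away the line is. The sketch below rests on two assumptions: the displayed rating is a plain mean of all ratings (the stores may compute it differently), and every new rating is five stars. Under those assumptions, the smallest number k of new reviews for n existing ratings averaging `avg` satisfies k ≥ n × (4.0 − avg) / (5 − 4.0). The helper name is hypothetical.

```python
import math
from fractions import Fraction

def five_star_reviews_needed(avg: float, count: int, target: float = 4.0) -> int:
    """Smallest number of new 5-star reviews that lifts a plain mean to `target`.

    Solves (avg*count + 5*k) / (count + k) >= target for the smallest integer k.
    Fractions avoid float rounding errors (in floats, 4.0 - 3.8 != 0.2).
    """
    if avg >= target:
        return 0
    if target >= 5:
        raise ValueError("target must be below 5 stars")
    a, t = Fraction(str(avg)), Fraction(str(target))
    return math.ceil(count * (t - a) / (5 - t))

print(five_star_reviews_needed(3.8, 100))  # 20
print(five_star_reviews_needed(3.9, 250))  # 25
```

Re-ratings move the average further per review. A bug-fix response that turns a one-star into a four-star adds three stars to the total without adding to the count.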

Let's say you shipped a major update that reaches feature parity with competitor A, but your reviews are worse than theirs. Five days after launch, you have 30 new reviews; competitor A gets 5. You're now more visible in search despite having a lower lifetime rating.

The algorithm interprets velocity as a signal of relevance. New reviews = active development = user interest = show it more.

This is why launching new features and sending review prompts to your users works for ASO. It's not manipulative — it's literally how the algorithms work. Fresh reviews are a ranking signal.

Review text is the least-discussed SEO hack in app marketing.

When users write "This calendar app syncs perfectly with Google Calendar," that review text can be indexed by the app store search algorithm. The keywords in reviews can then contribute to your search ranking for terms like "calendar sync" or "Google Calendar integration."

You can't fake this. But you can encourage it. In your onboarding, in your support responses, in your feature announcements — use the exact language your target users would search for. If they use that language when they're happy, it goes into their review, and suddenly you're ranking for that term.

The same goes for competitor names. Reviews for task managers often mention rivals directly: users write "better than Todoist" or "alternative to Evernote." That text is searchable, so the app can start ranking for that comparison.
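You can't control what gets indexed, but you can check whether the language you seed is showing up in reviews. This is a minimal sketch that assumes you have review text exported as strings. The phrases and sample reviews are hypothetical. A mention count says nothing about how either store weighs that text.

```python
import re

def phrase_mentions(reviews: list[str], phrases: list[str]) -> dict[str, int]:
    """Count how many reviews mention each phrase (case-insensitive, whole words)."""
    counts = {}
    for phrase in phrases:
        pattern = re.compile(r"\b" + re.escape(phrase) + r"\b", re.IGNORECASE)
        counts[phrase] = sum(1 for text in reviews if pattern.search(text))
    return counts

reviews = [
    "This calendar app syncs perfectly with Google Calendar",
    "Better than Todoist for my team",
    "Google Calendar integration is flawless, calendar sync just works",
]
print(phrase_mentions(reviews, ["google calendar", "calendar sync", "todoist"]))
# {'google calendar': 2, 'calendar sync': 1, 'todoist': 1}
```

Run it monthly against the phrases you use in onboarding and announcements. If a phrase never appears, users don't think in those words.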

Responding to reviews doesn't directly change the star rating, but it does change conversion. Here's what the data shows:

Users read reviews AND developer responses before downloading. A one-star review with a thoughtful developer response converts better than a five-star review with no response. The response signals that someone is home: the app is maintained and problems get fixed.

On the Apple App Store, there's no direct ranking boost for response rate. On Google Play, there appears to be one: apps with high response rates tend to rank higher, all else equal.

Beyond the algorithm: responding fast to reviews drives re-rating. Someone left you a one-star review because of a bug. You fix the bug and respond within 24 hours. They see your response, update the app, and change their rating to four stars. Now your average goes up AND your recency signal improves.
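The 24-hour target is easy to track. This sketch assumes you have each review's posting time and your reply time (or `None` if unanswered). The tuple format and the `on_time_response_rate` name are hypothetical, not a store console export format.

```python
from datetime import datetime, timedelta
from typing import Optional

def on_time_response_rate(
    reviews: list[tuple[datetime, Optional[datetime]]],
    within: timedelta = timedelta(hours=24),
) -> float:
    """Share of reviews answered within `within`; items are (posted_at, replied_at or None)."""
    if not reviews:
        return 0.0
    on_time = sum(
        1 for posted, replied in reviews
        if replied is not None and replied - posted <= within
    )
    return on_time / len(reviews)

t0 = datetime(2024, 6, 1, 9, 0)
log = [
    (t0, t0 + timedelta(hours=3)),    # answered fast
    (t0, t0 + timedelta(hours=30)),   # answered, but late
    (t0, None),                       # never answered
    (t0, t0 + timedelta(hours=20)),   # answered fast
]
print(on_time_response_rate(log))  # 0.5
```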

When you ask users for reviews matters.

Asking after a major feature launch? Perfect. Users are engaged, happy, review velocity spikes, ranking improves.

Asking users constantly or randomly? Bad. Review quality drops, you get more negative reviews from annoyed users, your average rating declines. The algorithm sees volatile rating patterns and deprioritizes your app.

The best time to prompt for reviews: after a core feature completes successfully. User finishes their first task in your app. User saves their first document. User completes their first workout. That's when they're happiest and most likely to leave a quality review.
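The timing rules above translate into a small gate you can run before asking the platform for a review prompt. On iOS that request goes through StoreKit's review request; on Android, through the Play In-App Review API. This sketch rests on stated assumptions. The event names and the 90-day cooldown are hypothetical. The cap of three per 365 days mirrors Apple's documented limit for its system prompt. Either OS may still decline to show the prompt, so this only decides when to ask.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Hypothetical event names for "a core feature completed successfully".
CORE_EVENTS = {"first_task_completed", "first_document_saved", "first_workout_completed"}

@dataclass
class PromptHistory:
    shown_at: list[datetime] = field(default_factory=list)

def should_request_review(
    event: str,
    succeeded: bool,
    history: PromptHistory,
    now: datetime,
    cooldown: timedelta = timedelta(days=90),
    max_per_year: int = 3,
) -> bool:
    """Decide whether to ask the OS for a review prompt right now."""
    if event not in CORE_EVENTS or not succeeded:
        return False  # only ask right after a successful core action
    last_year = [t for t in history.shown_at if now - t < timedelta(days=365)]
    if len(last_year) >= max_per_year:
        return False  # never nag: respect a yearly cap
    if last_year and now - max(last_year) < cooldown:
        return False  # space prompts out
    return True

now = datetime(2024, 6, 1)
history = PromptHistory()
print(should_request_review("first_task_completed", True, history, now))  # True
print(should_request_review("app_opened", True, history, now))            # False
history.shown_at.append(now)
print(should_request_review("first_document_saved", True, history, now + timedelta(days=10)))   # False
print(should_request_review("first_document_saved", True, history, now + timedelta(days=100)))  # True
```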

This sounds counterintuitive, but here's the truth: a one-star review with a legitimate complaint is better for your ASO than a fake five-star review.

The algorithms detect fake reviews and deprioritize them. They can smell review pods, incentivized reviews, and review farms from a mile away. Getting caught inflating your ratings tanks you harder than having a lower honest rating.

A genuine negative review shows authenticity. Real users, real feedback, real engagement. As long as your average stays above 4.0 and you're getting fresh reviews regularly, that one-star review is just noise.

Plus, your response to that one-star review is a public sign that you care. Now potential users see: bad review → developer fixed it → new review is better. That narrative converts.

Month one: Get above 4.0 stars. Ruthlessly respond to every negative review. Fix the top three bugs people mention. Ship an update. Ask satisfied users for reviews after they complete core actions.

Month two onward: Maintain review velocity. Every time you ship a feature, send a version update notification. Happy users will leave new reviews. Respond to everything within 24 hours.

Never: Buy reviews, join review pods, or incentivize reviews in violation of app store rules. You'll get caught and it will destroy your rankings permanently.

Always: Use user language in your feature descriptions and copy. When users see features described the way they think about them, they'll mention those terms in reviews, and you'll rank for those keywords.

This is how top indie apps stay on top. It's not luck. It's not marketing spend. It's a feedback loop: new feature → users leave reviews → app ranks higher → more new users find it → more reviews → better visibility.

I've written before about improving your app rating. That's half the equation. The other half is making sure reviews drive discovery.

When I analyzed what 10,000 reviews taught me, one pattern was unmistakable: apps that stay visible are apps with fresh review signals. Not necessarily the highest-rated — the most active.

Boost your ASO with consistent review management. AppTriage's review tracker auto-imports new reviews and lets you reply directly — keeping your response rate high and your ranking climbing.